How to use the LINEST function

What is the LINEST function?

The LINEST function returns an array of values representing the parameters of a straight line based on the "least squares" method namely the SLOPE and Intercept values. The LINEST function also lets you combine other functions in order to calculate polynomial, logarithmic, exponential and power series. This function must be entered as an array formula.

The LINEST function may also calculate the following:

- Std error (slope)

- R2

- F statistic

- RSS (Regression sum of squares)

- Std error for intercept

- Std error for the y value

- Degrees of freedom

- SSR (Residual sum of squares)

Table of Contents

1. Introduction

What is the least square method?

The least squares method is a standard approach to fitting regression lines and curves to data by minimizing the sum of the squares of the residuals. It finds the "best" line according to this squared error criterion.

Related functions

| Function | Description |

|---|---|

| LINEST(known_y's, [known_x's], [const], [stats]) | Returns statistics for a linear trendline fit to the data |

| SLOPE(known_y's, known_x's) | Returns slope of linear regression line |

| INTERCEPT(known_y's, known_x's) | Returns y-intercept of linear regression line |

| TREND(known_y's, [known_x's], [new_x's], [const]) | Returns predicted y values for a linear trend |

2. Syntax

LINEST(known_y's, [known_x's], [const], [stats])

3. Arguments

y = mx + b is the function used to calculate the straight line that fits the data best using "least squares" method.

| known_y's | Required. Single column - Each row is a separate variable. Single row - Each column is a separate variable. |

| [known_x's] | Optional. Known x points, default values are 1, 2, 3, ... |

| [const] | Optional. A boolean value determining if constant b is equal to 0 (zero). TRUE - constant b is calculated. Default. FALSE - constant b is 0 (zero). |

| [stats] | Optional. A boolean value determining whether to calculate additional regression statistics. TRUE - Returns additional regression statistics. {mn, mn-1, ..., m1, b;sen, sen-1, ..., se1, seb;r2, sey;F, df;ssreg, ssresid} FALSE - returns only m and b. |

The following table shows what the LINEST function returns if [stats] argument is TRUE.

| Statistic | Description |

| se1, se2, ..., sen | The standard error values. |

| seb | The standard error value for the constant b. seb returns #N/A when const argument is FALSE. |

| r2 | The coefficient of determination. A perfect correlation is 1 and 0 (zero) means no correlation based on comparing the actual and the LINEST functions estimated y-values. |

| sey | The standard error for the estimated y-values. |

| F | The F statistic, or the F-observed value. Determines if the observed relationship between the dependent and independent variables occurs by chance. |

| df | The degrees of freedom assists you in finding F-critical values, then compare the values to the F statistic to get the confidence level for the model. |

| ssreg | The regression sum of squares. |

| ssresid | The residual sum of squares. |

What is the R-square value?

The R-square value indicates the goodness of fit, with a value of 1 indicating a perfect fit.

What are the F-statistic and degrees of freedom?

The F-statistic and degrees of freedom provided by the LINEST function are used to perform a hypothesis test to assess the overall significance of the regression model.

The F-statistic is a measure of how well the regression model fits the data compared to a model with no predictor variables. A larger F-statistic indicates that the regression model is a better fit than a model with no predictors.

The degrees of freedom represent the number of independent pieces of information used to calculate the statistic. In the case of the LINEST function, the degrees of freedom represent:

- Degrees of freedom for the regression: This is the number of predictor variables in the model.

- Degrees of freedom for the residuals: This is the number of data points minus the number of parameters estimated (including the intercept if included).

Together, the F-statistic and the degrees of freedom are used to calculate a p-value, which determines the statistical significance of the regression model.

A low p-value (typically less than 0.05) indicates that the regression model is statistically significant, meaning that at least one of the predictor variables has a significant relationship with the dependent variable.

On the other hand, a high p-value (greater than 0.05) suggests that the regression model is not statistically significant, and the predictor variables do not have a significant relationship with the dependent variable.

By evaluating the p-value based on the F-statistic and degrees of freedom, you can determine whether the regression model is reliable for making predictions or if the predictor variables are not useful for explaining the variation in the dependent variable.

The F-test value from the LINEST function differs from the FTEST function:

- F-test (LINEST function): F statistic

- FTEST function: probability

What is the the regression sum of squares?

The regression sum of squares (RSS) is a measure of the discrepancy between the data and the estimated regression line. It represents the sum of the squared differences between the observed values of the dependent variable and the values predicted by the regression line.

RSS = Σ(ŷi - ymean)2

What is the the regression sum of squares?

The residual sum of squares (RSS or SSR) is a measure of the discrepancy between the observed values of the dependent variable and the values predicted by the regression model. It represents the sum of the squared residuals, where a residual is the difference between an observed value and its corresponding predicted value from the regression line.

SSR = Σ(yi - ŷi)2

- yi is the observed value of the dependent variable for observation i

- ŷi is the predicted value of the dependent variable for observation i, using the estimated regression line

4. Example

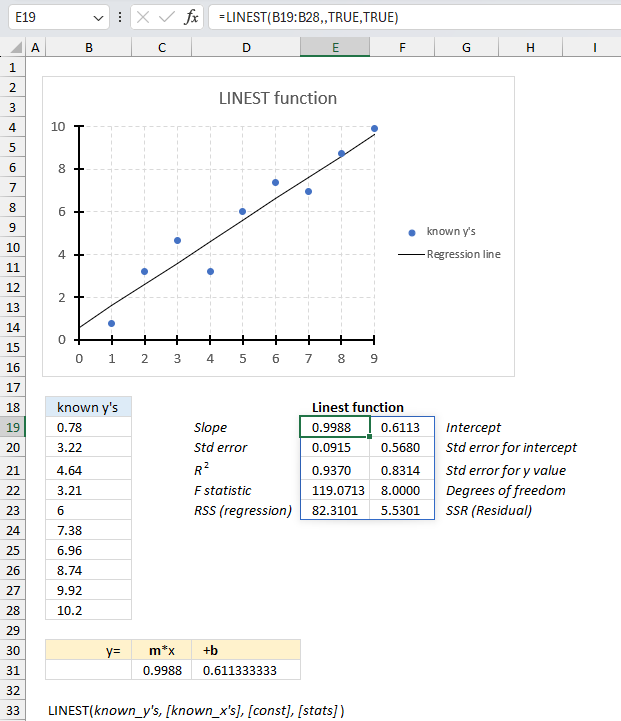

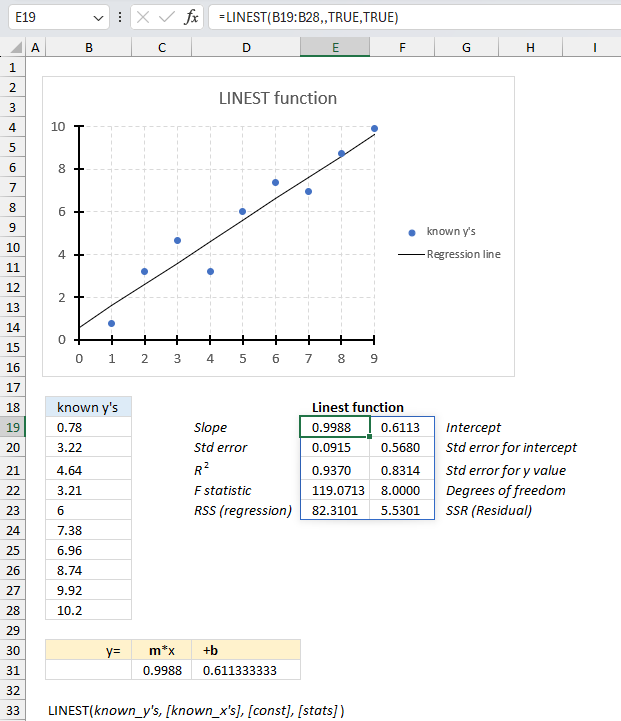

Array formula in cell range C31:D31:

To enter an array formula, type the formula in a cell then press and hold CTRL + SHIFT simultaneously, now press Enter once. Release all keys.

The formula bar now shows the formula with a beginning and ending curly bracket telling you that you entered the formula successfully. Don't enter the curly brackets yourself.

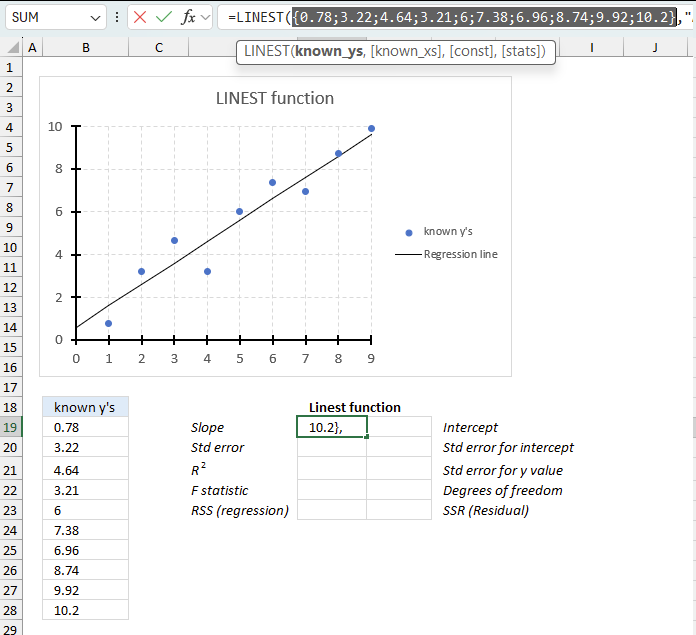

Array formula in cell range E19:F23:

The image shows a straight line based on the m and b parameters that the LINEST function calculated in cell C31 and D31.

The blue dots are the known y's in column B in the top image.

The SLOPE and INTERCEPT function lets you also find the parameters m and b needed to describe the straight line function.

y = mx + b

The TREND function calculates the y and x-values needed to plot the straight line function and the growth function for exponential curves.

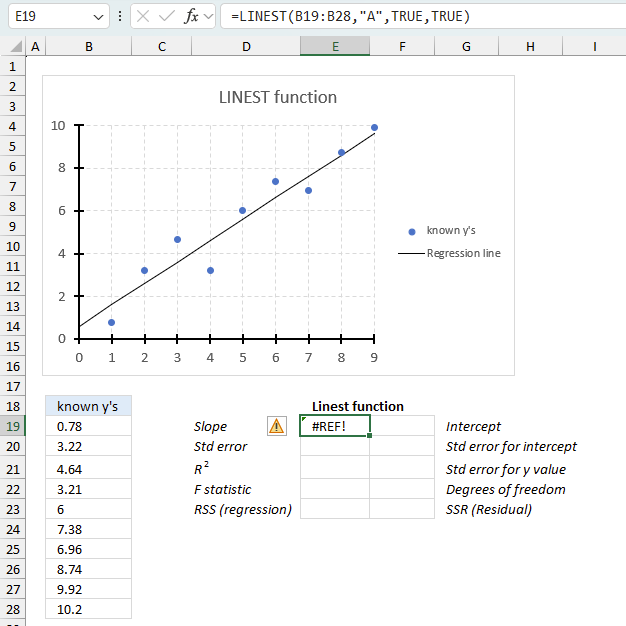

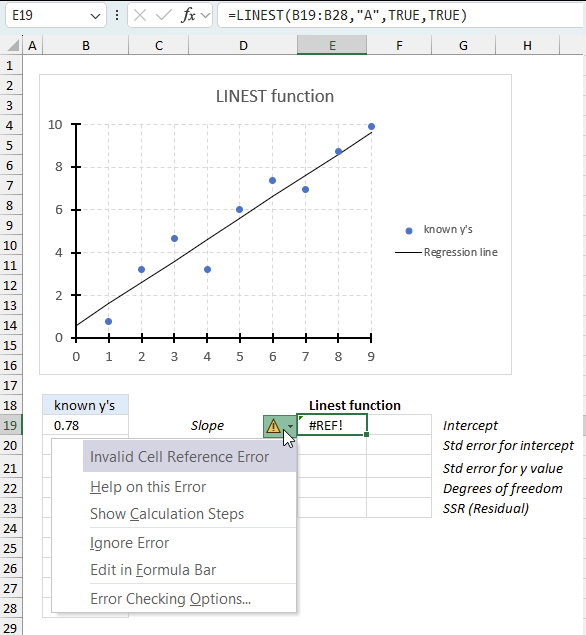

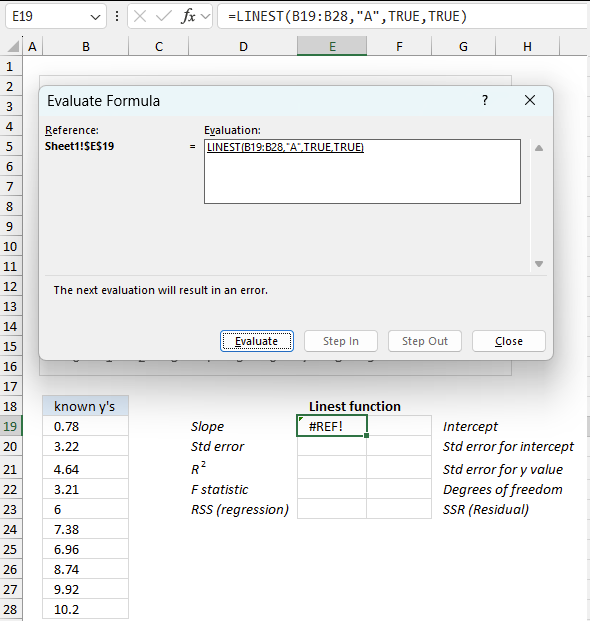

5. Function not working

The LINEST function returns

- #REF! error if you use a non-numeric input value.

- #NAME? error if you misspell the function name.

- propagates errors, meaning that if the input contains an error (e.g., #VALUE!, #REF!), the function will return the same error.

7.1 Troubleshooting the error value

When you encounter an error value in a cell a warning symbol appears, displayed in the image above. Press with mouse on it to see a pop-up menu that lets you get more information about the error.

- The first line describes the error if you press with left mouse button on it.

- The second line opens a pane that explains the error in greater detail.

- The third line takes you to the "Evaluate Formula" tool, a dialog box appears allowing you to examine the formula in greater detail.

- This line lets you ignore the error value meaning the warning icon disappears, however, the error is still in the cell.

- The fifth line lets you edit the formula in the Formula bar.

- The sixth line opens the Excel settings so you can adjust the Error Checking Options.

Here are a few of the most common Excel errors you may encounter.

#NULL error - This error occurs most often if you by mistake use a space character in a formula where it shouldn't be. Excel interprets a space character as an intersection operator. If the ranges don't intersect an #NULL error is returned. The #NULL! error occurs when a formula attempts to calculate the intersection of two ranges that do not actually intersect. This can happen when the wrong range operator is used in the formula, or when the intersection operator (represented by a space character) is used between two ranges that do not overlap. To fix this error double check that the ranges referenced in the formula that use the intersection operator actually have cells in common.

#SPILL error - The #SPILL! error occurs only in version Excel 365 and is caused by a dynamic array being to large, meaning there are cells below and/or to the right that are not empty. This prevents the dynamic array formula expanding into new empty cells.

#DIV/0 error - This error happens if you try to divide a number by 0 (zero) or a value that equates to zero which is not possible mathematically.

#VALUE error - The #VALUE error occurs when a formula has a value that is of the wrong data type. Such as text where a number is expected or when dates are evaluated as text.

#REF error - The #REF error happens when a cell reference is invalid. This can happen if a cell is deleted that is referenced by a formula.

#NAME error - The #NAME error happens if you misspelled a function or a named range.

#NUM error - The #NUM error shows up when you try to use invalid numeric values in formulas, like square root of a negative number.

#N/A error - The #N/A error happens when a value is not available for a formula or found in a given cell range, for example in the VLOOKUP or MATCH functions.

#GETTING_DATA error - The #GETTING_DATA error shows while external sources are loading, this can indicate a delay in fetching the data or that the external source is unavailable right now.

7.2 The formula returns an unexpected value

To understand why a formula returns an unexpected value we need to examine the calculations steps in detail. Luckily, Excel has a tool that is really handy in these situations. Here is how to troubleshoot a formula:

- Select the cell containing the formula you want to examine in detail.

- Go to tab “Formulas” on the ribbon.

- Press with left mouse button on "Evaluate Formula" button. A dialog box appears.

The formula appears in a white field inside the dialog box. Underlined expressions are calculations being processed in the next step. The italicized expression is the most recent result. The buttons at the bottom of the dialog box allows you to evaluate the formula in smaller calculations which you control. - Press with left mouse button on the "Evaluate" button located at the bottom of the dialog box to process the underlined expression.

- Repeat pressing the "Evaluate" button until you have seen all calculations step by step. This allows you to examine the formula in greater detail and hopefully find the culprit.

- Press "Close" button to dismiss the dialog box.

There is also another way to debug formulas using the function key F9. F9 is especially useful if you have a feeling that a specific part of the formula is the issue, this makes it faster than the "Evaluate Formula" tool since you don't need to go through all calculations to find the issue..

- Enter Edit mode: Double-press with left mouse button on the cell or press F2 to enter Edit mode for the formula.

- Select part of the formula: Highlight the specific part of the formula you want to evaluate. You can select and evaluate any part of the formula that could work as a standalone formula.

- Press F9: This will calculate and display the result of just that selected portion.

- Evaluate step-by-step: You can select and evaluate different parts of the formula to see intermediate results.

- Check for errors: This allows you to pinpoint which part of a complex formula may be causing an error.

The image above shows cell reference B19:B28 converted to hard-coded value using the F9 key.

Tips!

- View actual values: Selecting a cell reference and pressing F9 will show the actual values in those cells.

- Exit safely: Press Esc to exit Edit mode without changing the formula. Don't press Enter, as that would replace the formula part with the calculated value.

- Full recalculation: Pressing F9 outside of Edit mode will recalculate all formulas in the workbook.

Remember to be careful not to accidentally overwrite parts of your formula when using F9. Always exit with Esc rather than Enter to preserve the original formula. However, if you make a mistake overwriting the formula it is not the end of the world. You can “undo” the action by pressing keyboard shortcut keys CTRL + z or pressing the “Undo” button

7.3 Other errors

Floating-point arithmetic may give inaccurate results in Excel - Article

Floating-point errors are usually very small, often beyond the 15th decimal place, and in most cases don't affect calculations significantly.

Functions in 'Statistical' category

The LINEST function function is one of 73 functions in the 'Statistical' category.

How to comment

How to add a formula to your comment

<code>Insert your formula here.</code>

Convert less than and larger than signs

Use html character entities instead of less than and larger than signs.

< becomes < and > becomes >

How to add VBA code to your comment

[vb 1="vbnet" language=","]

Put your VBA code here.

[/vb]

How to add a picture to your comment:

Upload picture to postimage.org or imgur

Paste image link to your comment.

Contact Oscar

You can contact me through this contact form